Visual Effects Supervisor Ryan Tudhope delivered 2,500 shots on Joseph Kosinski’s high-octane racing film, using LiDAR-scanned track circuits, digitally reskinned and augmented race cars, and tightly integrated practical photography to maintain realism at speed.

Building on his justly earned reputation for producing hyper-realistic, high-octane cinematic experiences, from the Light Cycles in Tron: Legacy to the U.S. Navy fighter jets in Top Gun: Maverick, filmmaker Joseph Kosinski once more feeds our “need for speed” and death-defying sensations by putting audiences in the cockpit behind the wheel of a Formula One race car in his latest film, F1: The Movie. The film, nominated for four Oscars and two VES Awards, is now streaming on Apple TV.

Part of the director’s creative inner circle was Visual Effects Supervisor Ryan Tudhope, who was responsible for producing 2,500 visual effects shots with Framestore, ILM, Red VFX, Lola VFX and Metaphysic. That work supported a massive physical production captured by Cinematographer Claudio Miranda using special race car camera mounts constructed by Mercedes. “It was amazing to see what Claudio Miranda and the camera team did to elevate the technology from Top Gun: Maverick to this one,” states Tudhope. “Claudio custom-designed these pan heads, which some other folks then engineered to make sure they could handle the forces the wind would put on them. The cameras were able to pan and pull focus, which was critical on some of the shots.”

The shooting methodology was influenced by how Formula One actually broadcasts races.

“Normally, F1 as a business would show up at these tracks and lay their own RF [radio frequency] antennae all around so they could get telemetry data from the cars,” notes Tudhope. “We had to do our own version of that. It didn’t always work. We had to spend a few days working out all the bugs and figuring out where the blind spots were. It was critical because it allowed Joe, Claudio, our stunt coordinator Gary Powell and me to look at what was happening and know, ‘Yes, we got that shot. We will be able to reskin that. We’ll put those cars there. This lighting is good. We need to fix the exposure.’ Because our cameras were exposed to open air, they would get covered with all kinds of bugs and oil. There was always a question of, ‘Is that good? Can we paint that out?’ Without that live feed, you wouldn’t know any of those things until the footage came back and you reviewed it. It sped up the process, allowed us to get a lot more footage and runs, and let us know in the moment that we got what we wanted.”

As with the fighter jets in Top Gun: Maverick, the Formula One race cars were extensively reskinned to get the most out of the live-action footage. “[Reskinning of the race cars] was 100 percent the focus of our work,” states Tudhope, “and the foundation of what Joe and I wanted to bring from the Maverick experience to this film.” Nothing beats having real footage as the basis for a shot because the digital augmentation is inherently grounded. “Rather than shooting an empty plate, putting the car in later and trying to figure out what the dynamics were going to be, it gave us what is most important, which is camera operation that looks real because it is real. Also, in the case of gravel, you’re getting all the dust and debris kicking up, and you’re getting good reference for what that should look like as well. This goes back to the Zero Dark Thirty days when they swapped out one helicopter for another. It has been done over and over, but it’s one of the critical techniques that we like to employ.”

Another layer of reskinning involved using and altering actual Formula One race footage: the team took the uncompressed PAL feed, brought it down to 25 fps, and then played it back at 24 fps with added motion blur. The approach enabled the editorial team to select from material captured by both the broadcast and production cameras. “The editor, Stephen Mirrione, might pick a shot of a McLaren doing [something], but it needed to be our Apex car doing that,” remarks Tudhope. “We would swap out the McLaren for our Apex car.” Continuity was a constant concern. “We were careful as we went through the races [to keep track of] which car was in what position and what tires they were on. Everything was detailed in terms of how that worked. Oftentimes, we were reskinning cars so that the right car was in the background of a particular shot. It all made sense even when you went through it with a fine-tooth comb. There was a wide variety of reskinning techniques, and of reasons why we might do it.”
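The article does not say how the 25-to-24 fps conform was carried out, but the basic arithmetic of playing 25 fps material back at 24 fps is easy to sketch. The example below assumes frame-for-frame playback (no frames dropped, duplicated or retimed) and ignores the added motion blur; the function name `conform_to_24` is hypothetical and stands in for no particular tool.

```python
def conform_to_24(duration_s: float, source_fps: float = 25.0, target_fps: float = 24.0):
    """Play every source frame back, one for one, at target_fps (hypothetical helper).

    Returns (speed_factor, frame_count, new_duration_s). Because no frames are
    dropped or duplicated, the frame count is unchanged; only the playback rate,
    and therefore the apparent speed and the running time, change.
    """
    frame_count = round(duration_s * source_fps)  # frames captured in the source clip
    speed_factor = target_fps / source_fps        # 24/25: action plays at 96% of real speed
    new_duration_s = frame_count / target_fps     # same frames, shown more slowly
    return speed_factor, frame_count, new_duration_s


if __name__ == "__main__":
    speed, frames, new_len = conform_to_24(10.0)
    print(f"{frames} frames, {speed:.2%} of real speed, {new_len:.3f} s")
    # prints: 250 frames, 96.00% of real speed, 10.417 s
```

Under this assumption, a 10-second broadcast clip runs about 0.4 seconds longer after the conform, and a car filmed at 300 km/h appears on screen to be travelling at roughly 288 km/h.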

The front wing of the Apex car, which Sonny Hayes (Brad Pitt) intentionally destroys a number of times to cause yellow flags during races, was added digitally for safety reasons. “It seriously impacts the performance of the car,” reveals Tudhope. “You don’t have the downforce that you’re hoping for. They didn’t want Brad Pitt or even our stunt drivers driving the cars with those wings. We used a real version for when the car pulled into the pits. It looked broken. But when the damage actually occurs, that was digital. When we would see the car driving at speed and could see the wing broken, we removed the unbroken one and added the digital one.” The CG cars went through complicated simulations to emulate what was happening in the reference footage. “Getting the subtle details right, everything from the spoilers vibrating a little bit to the suspension and the camber of the tires, played a big role [in conveying speed].”

Nothing the production did could impede actual F1 races taking place on those tracks. “If we were doing some of our track laps in the middle of an actual F1 weekend, we typically weren’t able to do anything that would leave something on the track, knowing that there would be a real race afterwards,” explains Tudhope. “In those situations, we relied on visual effects. There were also several moments with real crashes during the F1 season where we had coverage from all these cameras to refer to. Early in Hungary, Brad’s tire comes off. Those are real shots of an F1 car that didn’t have a tire anymore. We reskinned it to look like our Apex car. Likewise in Abu Dhabi, there’s the whole crash that brings about the red flag and pauses everything, which gives our team a chance of winning. That was a real accident which occurred in 2023 at Abu Dhabi.” Tudhope adds, “It was reskinning, having to match all of the things that were shaking and breaking so it looked like an Apex car was going through that.”

De-aging was done for the film’s flashbacks. “We worked with Metaphysic and went through a typical AI de-aging process,” Tudhope says. “What was most complicated about that was all the legalities of getting permission from producers to use certain films to train the models on. Only a limited amount of work was required. There were only a handful of shots.”

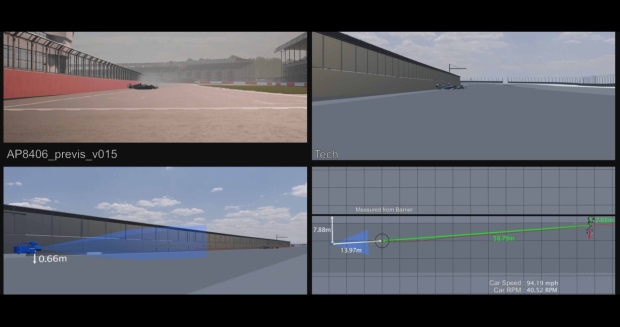

Capturing the racetracks was much more technically complex. According to Tudhope, “We worked with Clear Angle Studios to LiDAR scan the entirety of all the hero races that we went to throughout the film. It took a week for them to move their LiDAR scanner all the way around these tracks and scan the detail of the track itself, the grandstands and everything else around it down to millimeter accuracy. We also sent drones up and spiraled them around the tracks. Third, we ran an array vehicle with eight cameras that followed the race line around each track with a skinny shutter [to capture still photographs]. We did that during the real races, with people in the stands and signage up. For any given shot, we took all that data to create a digital version of the world, and that was reflected back into whatever we were adding digitally.”

Camera tracking was made easier by the extensive data acquisition of the racetracks. “The broadcast cameras go from 28mm to 600mm and they’re zooms, so they’re coming in and out and panning quickly,” recalls Tudhope. “There’s not a lot of reference for what’s around, so we needed to provide the team with good LiDAR data so that they could track those cameras effectively. This goes back to an interesting story. When Joe was first talking with F1 about this project, he and I were chatting about putting together some test shots built upon the Maverick methodology. We got some footage of Silverstone 2022, picked five shots, and went through the process of reskinning one of the cars into an early design of the Apex car to show everyone, ‘This is how we are going to make this movie. This is the methodology we want to employ,’ and to get all the F1 teams on board. Because we had never gone to any of those locations and didn’t have scans, it was complicated to track those cameras. We knew from that test it was something we were going to have to solve, and that’s what led us to LiDAR everything when it came time to make the actual movie. That test run taught us things and ended up being a good proving ground for some of the technology we wanted to deploy.”